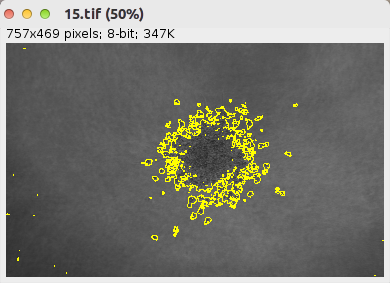

Once the selected features have been calculated for each pixel (each feature stored as a separate image) and the user has chosen sets of pixels for each class, the classifier can be trained. The plugin's classifier is the Fast Random Forest (FRF) algorithm, which is based on the (wait for it) Random Forest algorithm. The FRF algorithm is a re-implementation of the RF code as implemented in Weka. The RF algorithm uses numerous (default 200) classification trees (CTs) to 'vote' for which class a pixel, with its corresponding set of features, belongs to. The algorithm builds multiple trees from the same dataset by bootstrapping (sampling with replacement). Once the classifier is built from the user-chosen pixels (with their associated feature data and class assignments) and the entire training image or a new image is staged for classification, each pixel is classified by all the trees and is assigned to the class with the highest 'vote'.

A similar workflow can be written in Python with scikit-image and scikit-learn. The example imports `numpy` as `np`, `matplotlib.pyplot` as `plt`, `data`, `segmentation`, `feature` and `future` from `skimage`, `RandomForestClassifier` from `sklearn.ensemble`, and `partial` from `functools`. It loads the sample image with `data.skin()` into `full_img`, takes `img` from it, and builds a `uint8` array of labels, `training_labels`, for training the segmentation, with regions assigned to classes 1 through 4. Here we use rectangles, but visualization libraries such as plotly (and possibly napari) can be used to draw a mask on the image. The features are computed by a `features_func` built with `partial`, using `sigma_min = 1` and `sigma_max = 16`.
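The bootstrapping and voting steps described above can be sketched in a few lines of NumPy. This is only an illustration, not the FRF or Weka code: the function names `bootstrap_indices` and `majority_vote` are hypothetical, the per-tree predictions are made up, and breaking ties in favour of the lowest class id is an assumption.

```python
import numpy as np

def bootstrap_indices(n_samples, n_trees, rng):
    """Draw one bootstrap sample (row indices, with replacement) per tree."""
    return rng.integers(0, n_samples, size=(n_trees, n_samples))

def majority_vote(tree_predictions, n_classes):
    """Pick the most-voted class per pixel.

    tree_predictions has shape (n_trees, n_pixels) and holds integer class ids.
    Ties go to the lowest class id (an assumption of this sketch).
    """
    votes = np.stack(
        [(tree_predictions == c).sum(axis=0) for c in range(n_classes)]
    )
    return votes.argmax(axis=0)

rng = np.random.default_rng(0)
# 200 trees, each trained on a resampled copy of 10 labelled pixels.
idx = bootstrap_indices(n_samples=10, n_trees=200, rng=rng)

# Made-up predictions from 3 trees for 3 pixels.
predictions = np.array([[0, 1, 2],
                        [0, 1, 1],
                        [1, 1, 2]])
winners = majority_vote(predictions, n_classes=3)
print(winners)  # [0 1 2]
```

Because each row of `idx` is sampled with replacement, some labelled pixels appear several times in a tree's training set and others not at all, which is what makes the trees differ from one another.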

Gaussian blur performs four individual convolutions with Gaussian kernels with σ equal to 1, 2, 4, and 8. The larger the radius, the more blurred the image becomes, until the pixels are homogeneous.

The Sobel filter calculates the gradient at each pixel. Gaussian blurs with σ = 1, 2, 4 and 8 are performed prior to the filter.

The Hessian feature calculates a Hessian matrix H at each pixel:

$$H = \begin{pmatrix} \dfrac{\partial^2 f}{\partial x^2} & \dfrac{\partial^2 f}{\partial x\,\partial y} \\ \dfrac{\partial^2 f}{\partial y\,\partial x} & \dfrac{\partial^2 f}{\partial y^2} \end{pmatrix}$$

For $\partial^2 f/\partial x^2$, the X-direction Sobel kernel is convolved with the image twice. For $\partial^2 f/\partial y^2$, the Y-direction Sobel kernel is convolved with the image twice. For the mixed derivative, the X and Y-direction Sobel kernels are each convolved with the image once. Prior to the application of any filters, a Gaussian blur with σ = 1, 2, 4 or 8 is performed. The final features used for pixel classification are calculated from the Hessian matrix.

Difference of Gaussians calculates two Gaussian blur images from the original image and subtracts one from the other. The σ values are again set to 1, 2, 4, and 8, so 6 feature images are added to the stack (one for each pair of distinct σ values).

Membrane projections start from an initial kernel that is hardcoded as a 19x19 zero matrix with the middle column entries set to 1. Multiple kernels are created by rotating the original kernel in 15 degree steps up to a total rotation of 180 degrees, giving 12 kernels. Each kernel is convolved with the image, and the set of 12 images is then Z-projected into a single image via 6 methods, one of which is the standard deviation of the pixels in each image. Each of the 6 resulting images is a feature. Hence pixels in lines of similarly valued pixels that differ from the average image intensity will stand out in the Z-projections.

For mean, variance, median, minimum and maximum, the pixels within a radius of 1, 2, 4, and 8 pixels from the target pixel are subjected to the pertinent operation (mean, min, etc.) and the target pixel is set to that value. Each selected filter adds its images to the feature stack: if both Gaussian blur and mean are selected, each pixel will have nine features (the value at the pixel's location in the original image, four Gaussian blur images, and four mean images with different radii).
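As a rough illustration of the projection step described above, the sketch below builds the initial 19x19 kernel and collapses a stack of 12 images into six feature images. Several things here are assumptions: only the standard deviation is named in the text, so the other five projections (sum, mean, median, max, min) are guesses; `z_project` is a hypothetical name; and the kernel rotation and convolution are left out, with random data standing in for the 12 convolved images.

```python
import numpy as np

# Initial kernel as described in the text: a 19x19 zero matrix
# with the middle column entries set to 1.
kernel = np.zeros((19, 19))
kernel[:, 9] = 1.0

# Rotation angles in 15-degree steps; 0, 15, ..., 165 gives 12 kernels.
angles = np.arange(0, 180, 15)

def z_project(stack):
    """Collapse an (n_images, height, width) stack into six 2-D feature images."""
    return {
        "std": stack.std(axis=0),            # named in the text
        "sum": stack.sum(axis=0),            # assumed
        "mean": stack.mean(axis=0),          # assumed
        "median": np.median(stack, axis=0),  # assumed
        "max": stack.max(axis=0),            # assumed
        "min": stack.min(axis=0),            # assumed
    }

# Stand-in for the 12 convolved images (random data, used for shape only).
rng = np.random.default_rng(0)
convolved = rng.random((12, 32, 32))
features = z_project(convolved)
```

Each entry of `features` has the same height and width as the input image, so it can be appended to the feature stack like any other filter output.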
The plugin creates a set of features for each input image pixel by individually applying various filters to the image (for example, Gaussian blur, or the mean, variance, median, minimum and maximum of a neighbourhood). The user then selects sets of pixels in the image and assigns each set to a class (e.g. background, cells, nucleus, spores, cell membranes). The plugin builds a forest of classification trees by bootstrapping the feature data and assigned classes for the user-chosen pixels. The classifier can then be used to segment the training image and other images by applying the forest to each pixel: the trees 'vote' for which class each pixel belongs to based on its features.

The plugin creates a stack of images, one image for each feature. For instance, if only Gaussian blur is selected as a feature, the classifier will be trained on the original image and four blurred images with four different σ values for the Gaussian, so each pixel will have 5 features.
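The feature stack described above (the original image plus four Gaussian blurs, giving five features per pixel) can be sketched with NumPy alone. This is a minimal sketch under stated assumptions, not the plugin's implementation: the helper names are hypothetical, the kernel is truncated at about 3σ, and the borders are handled by reflection.

```python
import numpy as np

def gaussian_kernel_1d(sigma):
    """Normalised 1-D Gaussian, truncated at about 3 sigma (an assumption)."""
    radius = int(3 * sigma + 0.5)
    x = np.arange(-radius, radius + 1)
    k = np.exp(-(x ** 2) / (2.0 * sigma ** 2))
    return k / k.sum()

def gaussian_blur(image, sigma):
    """Separable Gaussian blur of a 2-D image with reflected borders."""
    k = gaussian_kernel_1d(sigma)
    radius = len(k) // 2
    padded = np.pad(image, radius, mode="reflect")
    # Blur along rows, then along columns; "valid" trims the padding again.
    rows = np.apply_along_axis(lambda r: np.convolve(r, k, mode="valid"), 1, padded)
    return np.apply_along_axis(lambda c: np.convolve(c, k, mode="valid"), 0, rows)

def feature_stack(image, sigmas=(1, 2, 4, 8)):
    """Original image followed by one blurred image per sigma."""
    return np.stack([image] + [gaussian_blur(image, s) for s in sigmas])

rng = np.random.default_rng(0)
image = rng.random((32, 32))             # stand-in for a real image
stack = feature_stack(image)             # shape (5, 32, 32)
per_pixel = stack.reshape(len(stack), -1).T  # shape (1024, 5): 5 features per pixel
```

Each row of `per_pixel` is the feature vector the classifier sees for one pixel. Selecting a further filter, such as the mean at four radii, would append four more images to the stack and four more columns to each row.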
